I want to give you a simple perspective on wading through the complexities of life, a metacognitive framework.

But before we get to any of the fun stuff, we need to take a short, mathematical diversion. Don’t worry, no equations were harmed in the writing of this essay. Ok, fine, I used one but the only thing you need to understand about that equation is that it’s short.

We need to establish precisely what we mean by simple and complex. If we don’t, we’ll get stuck in semantics. Nailing things down precisely and building on them is huge. It’s the foundation of the house of ideas we will explore today, and it’s a large part of how software is eating the world.

Ok, here we go. Math ahead (that’s bad). But there’s also a gif and a Simpsons picture (that’s good!).

A Simple Definition of Complexity

We all have decent intuition about whether something is simple or complex. “aaaaaaaaaaaaaaaaaa” is less complex than “98734hjskjhw”. The first is just 18 a’s. The second you have to spell out in detail.

A rough take on how to measure simplicity is: “how long does it take to explain to a stranger?” You could tell them “‘a’ 18 times” or you could spell out, in excruciating detail, “nine eight seven three four….” and so on.

“‘a’ two billion times” is about as simple as “‘a’ ten times”. But imagine how long it would take to describe two billion random letters! Monkeys typing on keyboards. Forever.
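One rough, hands-on way to feel this difference is to hand both kinds of text to a general-purpose compressor and compare the output sizes. The sketch below uses Python’s standard `zlib` module. The helper name `compressed_size`, the seed, and the sample size are made up for illustration, and compressed size is only a stand-in for “how long it takes to explain”, not a formal measure.

```python
import random
import string
import zlib


def compressed_size(text: str) -> int:
    """Return the number of bytes zlib needs to store the text."""
    return len(zlib.compress(text.encode("utf-8"), 9))


# A fixed seed keeps the "random" text the same on every run.
rng = random.Random(0)
n = 100_000

repeated = "a" * n
scrambled = "".join(rng.choice(string.ascii_lowercase) for _ in range(n))

print("repeated :", compressed_size(repeated), "bytes")
print("scrambled:", compressed_size(scrambled), "bytes")
```

Both inputs are the same length, but the repeated one should shrink to a tiny fraction of the scrambled one, because the compressor finds the pattern and the random letters give it nothing to work with.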

That’s all well and good for intuition, but until 1963 we didn’t have a formal, rigorous way to talk about it. We couldn’t talk about it like mathematicians. This is important, because we couldn’t build precise new ideas on it. More on that later.

So what happened in 1963? Two people named Kolmogorov and Chaitin took the idea of “how long does it take to describe something?” and made it all math-y and rigorous. The short version is: something is as simple as its shortest possible description.

“A billion ‘a’s”, as a description, is far, far shorter than “aaaaaaaaaaaaaaaaaaaaaaaaaa…..”. So the complexity of a billion a’s is about 10, the number of letters you need to uniquely describe a billion a’s.

Kolmogorov and Chaitin used this definition to come up with a bunch of interesting conclusions. These conclusions are sometimes counterintuitive, but since they were proved with mathematical rigor, we know they are true, so we can use them and not worry too much that we’re wrong.

They proved that you can’t come up with a way to compute this for any random thing. You can’t take a random string of letters, plug it into some machine, and guarantee that the machine spits out the shortest description.

There’s another thing they found, which is kind of troubling, and it’s the foundation I built this essay on: There are very few simple things. The number of things with short descriptions is a tiny speck compared to the number of things with long, complex descriptions.

For all practical purposes, nothing is simple: The number of simple things is nothing compared to what is complex. And we are mathematically sure of this. But how? The formal hand-wavey term is something like “combinatorial explosion”, which is just a fancy way of saying “You can write SO MANY MORE long descriptions than short descriptions.” So, so many more. Exponentially more.

How does this work?

Imagine a toy language with just ten simple words, like “hot”, “blue”, “mad”, etc. If you only use one word, there are a grand total of ten things you can describe. Just ten. No more.

Now you can use two words. How many things can you now describe? “hot blue”, “mad hot”, and so on. There are 100. Ten words times ten words. Some of them may not be as clear as others, e.g. what does “blue mad” mean? But it’s a different thing than “mad blue”, so it adds to the list.

With three words? A thousand: 10 times 10 times 10. Four words? Ten thousand. Five? 100,000. It gets out of hand really fast. There are exponentially more complex things than simple things.
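The counting above is small enough to check by brute force. Here is a minimal sketch that lists every ordered phrase in a ten-word toy language and counts them. Only “hot”, “blue”, and “mad” come from the text; the other seven words and the function name `count_phrases` are placeholders invented for the example.

```python
from itertools import product

# "hot", "blue", "mad" come from the essay; the rest are made-up filler.
WORDS = ["hot", "blue", "mad", "cold", "red", "glad", "big", "small", "fast", "slow"]


def count_phrases(length: int) -> int:
    """Count ordered phrases of exactly `length` words by listing them all."""
    return sum(1 for _ in product(WORDS, repeat=length))


counts = {k: count_phrases(k) for k in range(1, 6)}
for k, total in counts.items():
    print(f"{k} word(s): {total:,} phrases")

# Share of all phrases up to five words that use only one or two words.
short_share = (counts[1] + counts[2]) / sum(counts.values())
print(f"one- and two-word phrases: {short_share:.3%} of the total")
```

Each extra word multiplies the count by ten, so the short phrases quickly become a rounding error next to the long ones.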

Life and all its complexity gets really out of hand, really fast.

But we love simple truths. They’re easy to remember. They give us the feeling that we understand something deeply, but without all the complexity.

Spending days, months, or years mucking through a complex life can be exhausting: Serving on a jury, raising a child, building a company. At some point while slogging through all this complexity, you discover some shorter way of explaining everything you went through. We call these shortcuts insights or aphorisms.

It’s like going from something that appears complex, like this…

And then realizing, like Benoit Mandelbrot did, that all you need to make that beautiful depth is one short equation:

You don’t have to understand the equation, just notice that it’s small.
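Assuming the equation in question is the usual Mandelbrot iteration, z → z² + c starting from z = 0, a few lines of Python are enough to draw a rough text version of the picture. The function names, the 50-step cutoff, and the grid size are arbitrary choices for illustration.

```python
def escapes_after(c: complex, max_steps: int = 50) -> int:
    """Iterate z -> z*z + c from z = 0; return the step at which |z| exceeds 2.

    Returns max_steps if z never escapes within the cutoff, which is treated
    here as "probably inside the set".
    """
    z = 0j
    for step in range(max_steps):
        z = z * z + c
        if abs(z) > 2:
            return step
    return max_steps


def render(width: int = 60, height: int = 24, max_steps: int = 50) -> str:
    """Draw the region -2..1 by -1.2..1.2 as rows of '#' and spaces."""
    rows = []
    for j in range(height):
        y = 1.2 - 2.4 * j / (height - 1)
        row = ""
        for i in range(width):
            x = -2.0 + 3.0 * i / (width - 1)
            inside = escapes_after(complex(x, y), max_steps) == max_steps
            row += "#" if inside else " "
        rows.append(row)
    return "\n".join(rows)


print(render())
```

The whole rule lives in the single line `z = z * z + c`. Everything else is bookkeeping for deciding which points to mark.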

Most truths are complex, but the best things I learn are simple insights that unlock entire worlds of understanding. The best things I learn are like Mandelbrot’s short equations that generate immense, emergent complexity and beauty (stare at that gif for a while).

But Kolmogorov and Chaitin proved that these simple things are so incredibly rare. Proved. Like, we are for sure certain of this.

So why do I feel like everything I learn is simple? The answer is simple (ha): we don’t have that much space in our heads. We evolved brains that notice patterns, remember patterns, and apply those patterns. It’s way more efficient than a giant lookup table.

We use technology for those giant lookup tables. We have ever since we invented writing. The great breakthrough with writing was that we could store all these complex truths that came out of our heads and not have to remember them all. James Joyce wrote down Ulysses. He didn’t have to memorize it and pass it down like The Odyssey. Big win for our brains.

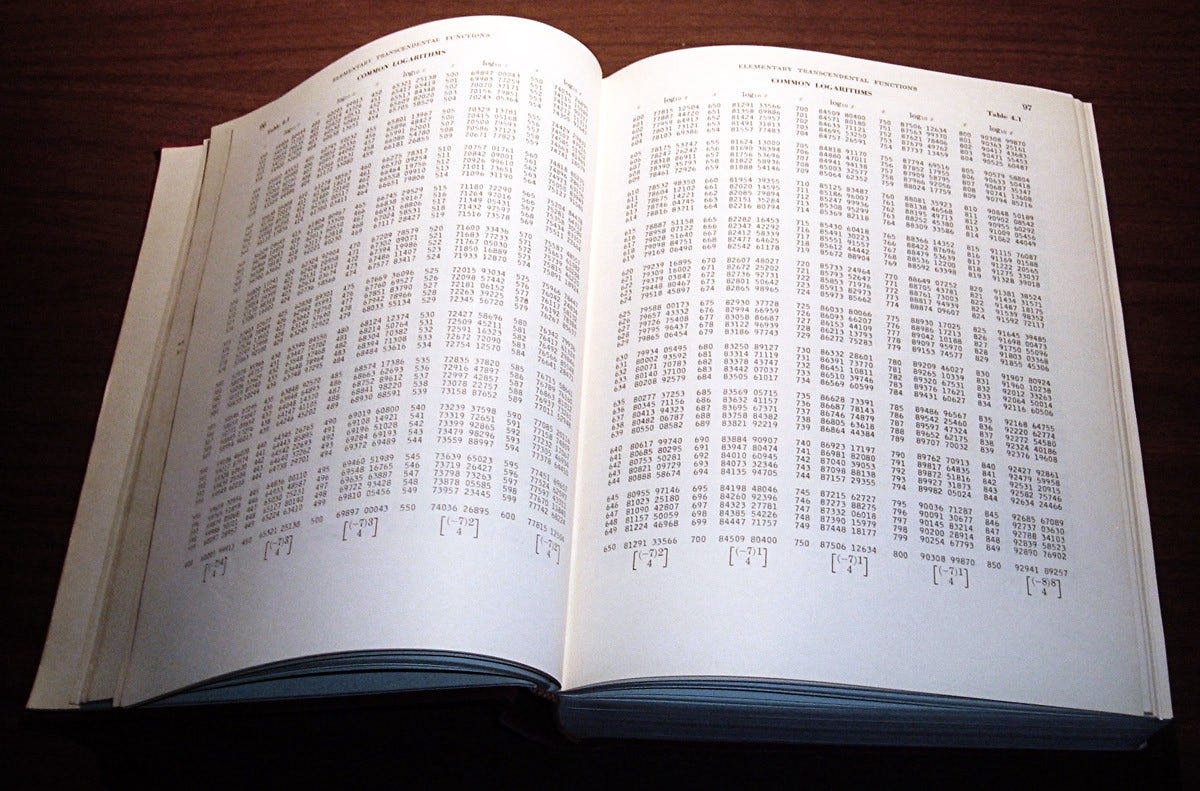

In the days before calculators and computers, humanity made a bunch of lookup tables precisely because we had to offload the work. Our heads can understand logarithms pretty well, and we could understand how to calculate them, but the number of logarithms in the world is literally infinite. So we wrote them down outside our heads. In log books:

It’s a Log Book! Ha! A Math Pun! I REGRET NOTHING!

“So What?”, You Ask. Good Question.

It’s more useful to have two things: Simple truths and ways of applying those truths. This shouldn’t shock you. It’s supposed to be obvious. What Kolmogorov showed us is precisely how many more truths there are than dreamt of in our philosophy. Exponentially more. So many more.

I admit, it’s all pretty abstract. The short answer: use thinking (even software?) grounded in simple truths to tackle the complexity of your life. Elon Musk calls this Reasoning from First Principles. This is thinking forwards: taking our simple truths and seeing where they take us.

But you have to get the simple truths right. And you have to get their application right, too. In fact, the very reason we needed a mathematically precise definition of complexity was so Kolmogorov and Chaitin could do something with it and get their conclusions right. Your simple truths are the foundation upon which you build the house of your mind’s interactions with the world. And their applications are the joints, struts, and epoxy that hold the house together. There’s no house without both.

You have to update and evolve your simple truths, just as you keep repairing your house when you find cracks and install new heating.

How do You Even Learn Simple Truths?

Paradoxically, we humans seem to learn simple truths from complex truths. Math-y people like to call that abstraction; it’s also called pattern-matching. You have to slog through a life of complex truths to see the underlying, simple patterns. Some people have done that already and are happy to share with you what they’ve found. We usually call that teaching.

(Math aside: poring over complexity and seeing some simple pattern underneath is kinda like factoring a large composite number into its primes.)
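To make the aside concrete: a big number can look arbitrary until it’s broken into its prime factors. Below is a minimal trial-division sketch. The function name is made up, and this approach is only practical for smallish numbers.

```python
def prime_factors(n: int) -> list[int]:
    """Return the prime factors of n (n >= 2) in ascending order, by trial division."""
    factors = []
    divisor = 2
    while divisor * divisor <= n:
        # Pull out every copy of this divisor before moving on.
        while n % divisor == 0:
            factors.append(divisor)
            n //= divisor
        divisor += 1
    if n > 1:
        # Whatever is left over is itself prime.
        factors.append(n)
    return factors


print(prime_factors(1_048_576))
print(prime_factors(600_851_475_143))
```

The first number turns out to be nothing but twos multiplied together, which is a much shorter story than its seven digits suggest.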

So I’m Supposed to Learn Patterns? Thanks. Big insight, there.

Yeah, this essay may not change your life, but it might change your outlook. Fill your space-limited brain with simple truths, how to notice patterns, and how to apply those patterns (be foxy). This is thinking backwards, wading through complexity and backing up to see patterns and extract truths.

You have limited headspace, and can’t remember all that complexity you just waded through, so you forget some of the complexity and remember the simple parts. That’s fine, even good. You can use writing to store the rest.

Or even better, use software to generate the rest. It doesn’t just store the what of a complex truth, it stores the how of complex truths. That is software’s greatest power. You don’t need that enormous log book when just a snippet of code will compute the answer.
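As a small illustration of storing the what versus storing the how, the sketch below builds a miniature “log book” as a lookup table and then sets it next to a one-line computation using Python’s standard `math.log10`. The table range and the helper names `look_up` and `compute` are invented for the example.

```python
import math

# The "what": a miniature log book covering 1.0, 1.1, ..., 9.9.
# It is filled in with math.log10 here for brevity; the printed books
# were worked out by hand. Either way, a table has to stop somewhere.
LOG_BOOK = {n / 10: round(math.log10(n / 10), 4) for n in range(10, 100)}


def look_up(x: float) -> float:
    """Find log10(x) in the table; raises KeyError if x is not listed."""
    return LOG_BOOK[x]


def compute(x: float) -> float:
    """The "how": work out log10(x) on demand for any positive x."""
    return math.log10(x)


print(look_up(2.5))       # listed in the book
print(compute(2.5))       # same answer, more digits
print(compute(1234.567))  # not in the book, still answerable
```

The table holds 90 answers and nothing else. The function holds no answers at all, yet it covers every positive number you can type.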

How and Where Does This Happen in the Real World?

Math is the purest example of building off simple truths, but software is the great practical example of precise truths built on top of each other. All software you see is layers upon layers upon layers. We’ve built so many layers that we’ve gone all the way up to the clouds. Sure, sometimes the software layers aren’t as solid as they should be, but for the most part software’s abstractions allow for staggering growth.

Much as the simple truths you hold evolve over time, the right level of software abstraction is always evolving. In software (warning: oversimplification) we started with functions, then moved up to libraries, then programs, and now we’re experimenting with combined programs/operating-systems (containers).

Something Kevin Kwok has been thinking about is where else these layered abstractions might apply. Where else besides math and computers can you build layers and layers of abstractions? I’ll speculate on two possibilities:

The industrial bureaucracy comes to mind, with people performing precise duties so their superiors would (ideally) not worry about the layers below them. Humans are both less predictable and more adaptable than machines, though, so the analogy breaks down. Engineers love and need their abstraction tools. We generally hate middle-management.

The Law seems ripe for abstraction, but from my (very limited) outside perspective there seems to be no abstraction at all, just a complicated, interwoven network of precedent. If anyone with legal expertise can comment, I’d love it.

So keep powering through the complexity of life. Think backwards to abstract your simple truths. Test your simple truths early and often. Define your truths precisely enough to think forwards into novel conclusions; there are far more than you might expect.